- Free pickup at your Club store

- 7,000,000 titles in our catalog

- Secure payment

- Always a store near you

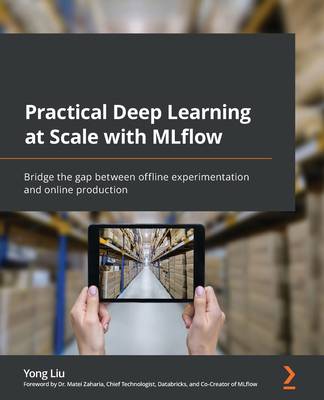

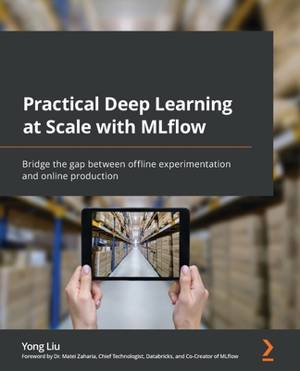

Practical Deep Learning at Scale with MLflow

Bridge the gap between offline experimentation and online production

Yong Liu

Description

Train, test, run, track, store, tune, deploy, and explain provenance-aware deep learning models and pipelines at scale with reproducibility using MLflow

Key Features:

- Focus on deep learning models and MLflow to develop practical business AI solutions at scale

- Ship deep learning pipelines from experimentation to production with provenance tracking

- Learn to train, run, tune and deploy deep learning pipelines with explainability and reproducibility

Book Description:

The book starts with an overview of the deep learning (DL) life cycle and the emerging field of machine learning operations (MLOps). It gives a clear picture of the four pillars of deep learning (data, model, code, and explainability) and of the role MLflow plays in each of these areas.

From there, it guides you step by step through MLflow experiments and their usage patterns. It shows you how to use MLflow as a unified framework to track DL data, code and pipelines, models, parameters, and metrics at scale. You'll also tackle running DL pipelines in a distributed execution environment with reproducibility and provenance tracking. You'll tune DL models through hyperparameter optimization (HPO) with Ray Tune, Optuna, and HyperBand. As you progress, you'll learn how to build a multi-step DL inference pipeline with preprocessing and postprocessing steps and how to deploy it to production using Ray Serve and AWS SageMaker. Finally, you'll create a DL explanation-as-a-service (EaaS) using the popular SHapley Additive exPlanations (SHAP) toolbox.

By the end of this book, you'll have built the foundation and gained the hands-on experience you need to develop a DL pipeline solution from initial offline experimentation to final deployment and production, all within a reproducible, open-source framework.

What You Will Learn:

- Understand MLOps and deep learning life cycle development

- Track deep learning models, code, data, parameters, and metrics

- Build, deploy, and run deep learning model pipelines anywhere

- Run hyperparameter optimization at scale to tune deep learning models

- Build production-grade multi-step deep learning inference pipelines

- Implement scalable deep learning explainability as a service

- Deploy deep learning batch and streaming inference services

- Ship practical NLP solutions from experimentation to production

Who this book is for:

This book is for machine learning practitioners, including data scientists, data engineers, ML engineers, and scientists, who want to build scalable, full life cycle deep learning pipelines with reproducibility and provenance tracking using MLflow. A basic understanding of data science and machine learning is necessary to grasp the concepts presented in this book.

Specifications

Contributors

- Author(s):

- Publisher:

Content

- Number of pages: 288

- Language: English

Details

- EAN: 9781803241333

- Publication date: 08-07-22

- Format: Paperback

- Digital format: Trade paperback (VS)

- Dimensions: 190 mm × 235 mm

- Weight: 498 g
