- Free pickup at your Club store

- 7,000,000 titles in our catalogue

- Secure payment

- Always a store near you

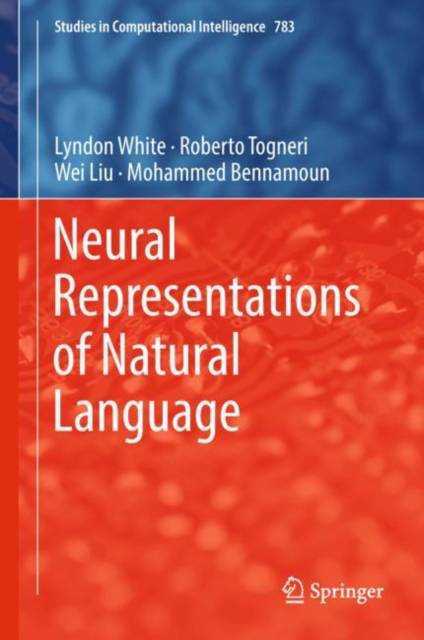

Neural Representations of Natural Language

Lyndon White, Roberto Togneri, Wei Liu, Mohammed Bennamoun

97,95 €

+ 195 points

Description

Enriches readers' understanding of how neural networks create a machine-interpretable representation of the meaning of natural language

Includes an introductory chapter on machine learning, allowing novice readers to quickly understand how it is revolutionizing the field of natural language processing

Highlights mature commercial and open-source implementations available, providing key tips for readers implementing the reviewed techniques themselves

Specifications

Contributors

- Author(s): Lyndon White, Roberto Togneri, Wei Liu, Mohammed Bennamoun

- Publisher:

Contents

- Number of pages: 122

- Language: English

- Series:

- Volume: No. 783

Characteristics

- EAN: 9789811300615

- Publication date: 18-09-18

- Format: Hardcover

- Digital format: Sewn (Genaaid)

- Dimensions: 156 mm x 234 mm

- Weight: 371 g

Reviews

We only publish reviews that meet the required conditions. See our conditions for reviews.